The Line We Cannot Uncross

In the quiet corridors of Silicon Valley’s most powerful companies, a rebellion is brewing. Not over stock options or remote work policies, but over something far more consequential: the use of artificial intelligence in autonomous weapons systems. Tech insiders—engineers, researchers, and ethicists who have spent years building the very AI systems now being weaponized—are raising the alarm. Their message is stark and urgent: we are crossing a moral line from which there may be no return.

The debate over AI in warfare has reached a critical inflection point in 2026. What was once the domain of science fiction and academic philosophy has become a pressing reality as nations race to deploy autonomous weapons systems capable of selecting and engaging targets without meaningful human control. The question is no longer if AI will transform warfare, but how—and whether we can maintain our humanity in the process.

What’s Happening: The Autonomous Arms Race

The current state of AI in warfare represents a fundamental shift in how conflicts are fought. Unlike previous military technologies that augmented human capabilities, autonomous weapons systems are designed to make life-and-death decisions independently. These systems use machine learning algorithms to identify, track, and engage targets based on pre-programmed parameters and real-time data analysis.

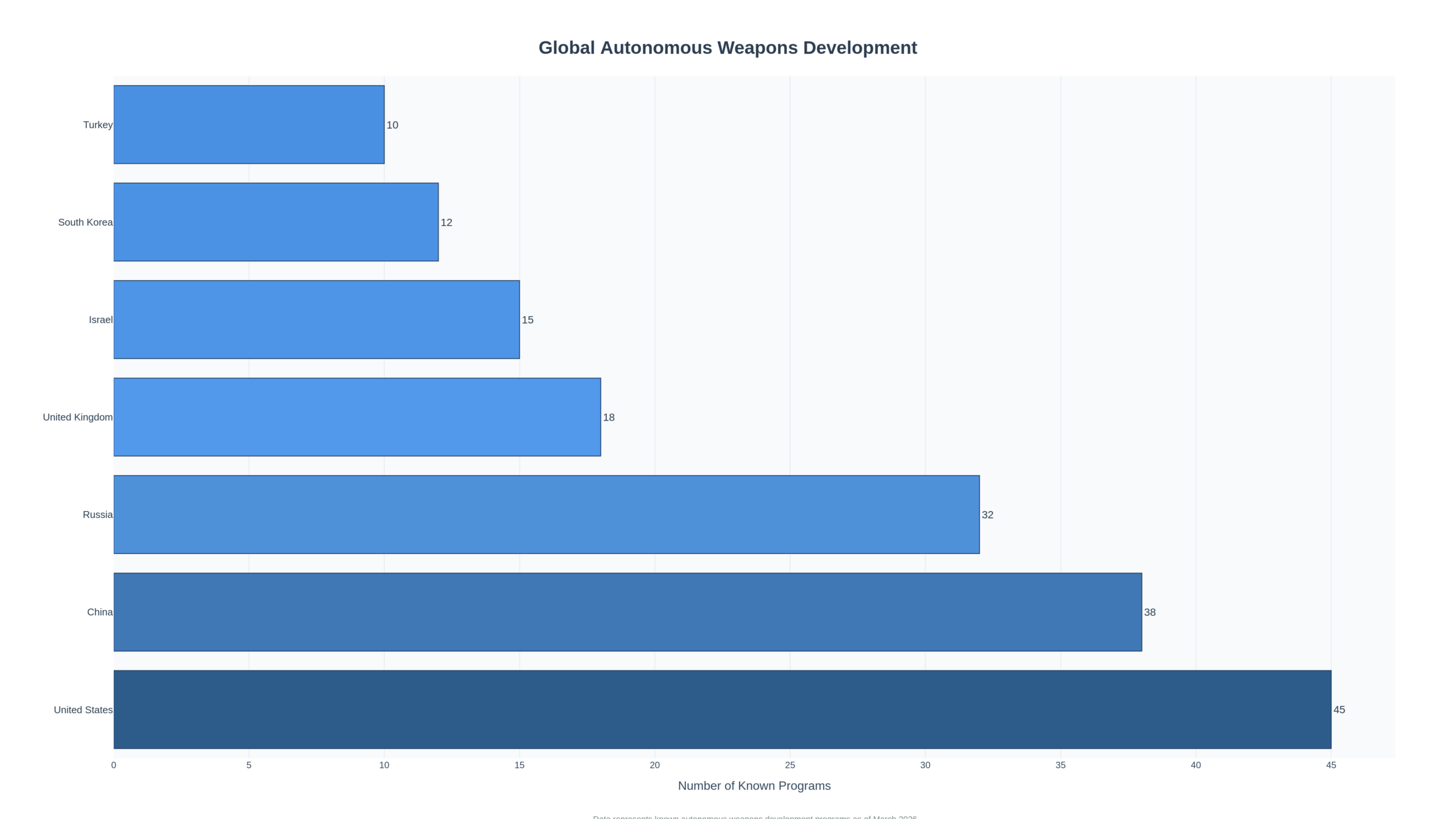

According to recent reports, over 30 countries are now developing or deploying some form of autonomous weapons technology. The spectrum ranges from defensive systems like Israel’s Iron Dome, which automatically tracks incoming rockets and computes intercepts, to fully autonomous drones capable of conducting offensive operations without human intervention. The U.S. Department of Defense has invested billions in Project Maven and similar initiatives that use AI for target recognition and battlefield intelligence.

But the technology has evolved far beyond simple pattern recognition. Modern autonomous weapons systems incorporate:

- Computer vision and sensor fusion that can identify targets in complex environments with greater accuracy than human operators

- Reinforcement learning algorithms that adapt tactics in real-time based on battlefield conditions

- Swarm intelligence enabling coordinated attacks by dozens or hundreds of autonomous drones

- Predictive analytics that anticipate enemy movements and optimize strike timing

The speed and scale at which these systems operate fundamentally changes the calculus of warfare. Decisions that once took minutes or hours can now be made in milliseconds. Attacks that required extensive planning and coordination can be executed autonomously across vast geographic areas.

Why It Matters: The Ethical Rubicon

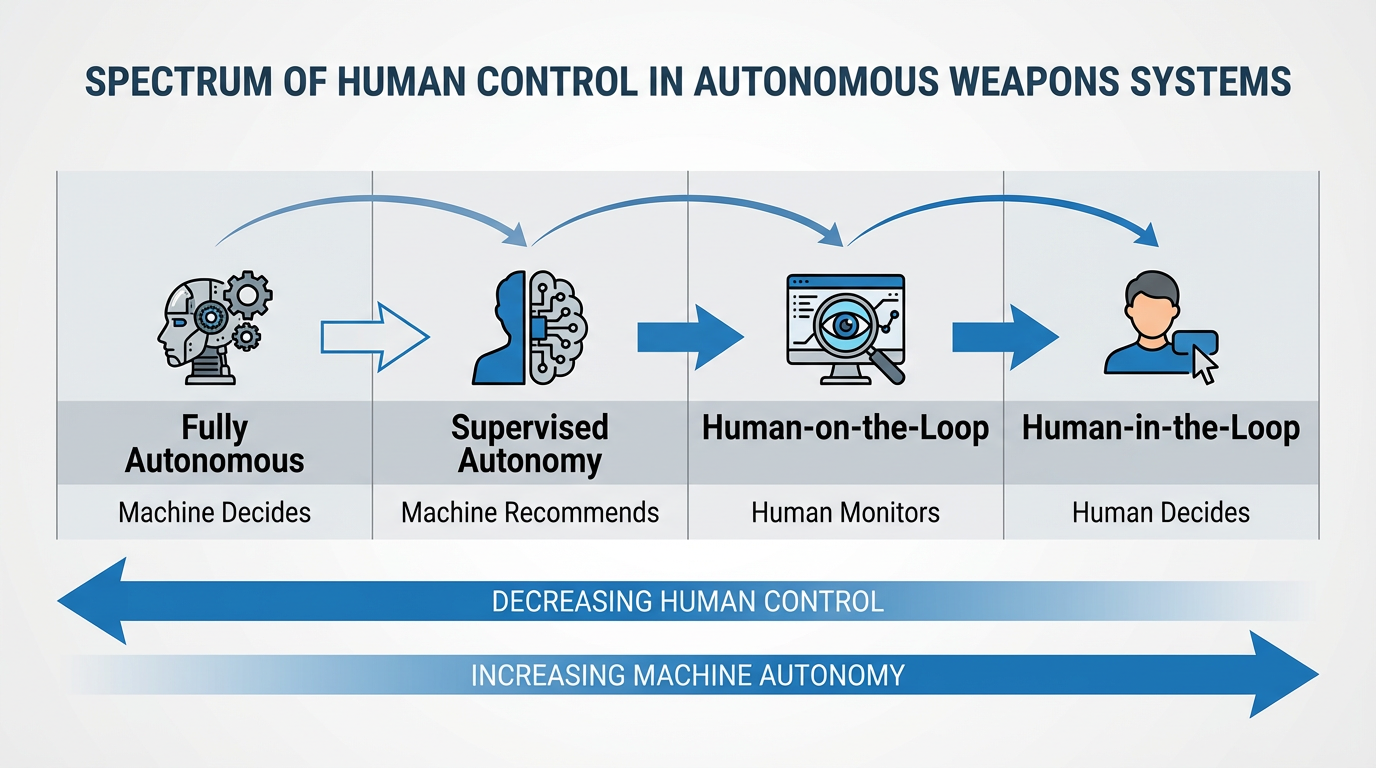

The deployment of autonomous weapons systems raises profound ethical questions that strike at the heart of what it means to be human. At the center of the debate is the concept of “meaningful human control”—the principle that humans, not machines, should make the final decision to take a life.

Critics argue that delegating lethal decision-making to algorithms crosses a moral threshold that should never be breached. As Dr. Stuart Russell, a leading AI researcher at UC Berkeley, has warned: “The key question is not whether these systems will work, but whether we want to live in a world where machines decide who lives and dies.” The concern is not merely philosophical. When an autonomous system makes a mistake—and all AI systems do—who is accountable? The programmer? The commanding officer? The algorithm itself?

The problem of accountability becomes even more complex when we consider the “black box” nature of many modern AI systems. Deep learning models can make decisions based on patterns that even their creators don’t fully understand. In warfare, this opacity is particularly dangerous. How can we ensure compliance with international humanitarian law—which requires distinction between combatants and civilians, proportionality in the use of force, and precaution to minimize harm—when we can’t fully explain why an AI system selected a particular target?

There’s also the risk of escalation. Autonomous weapons systems operate at machine speed, potentially triggering rapid escalation cycles that outpace human decision-making. In a crisis, two autonomous systems could engage in a feedback loop of action and reaction, escalating to full-scale conflict before human operators can intervene. The scenario has a civilian precedent: financial markets have already suffered “flash crashes” when algorithmic trading systems interacted in unexpected ways. The stakes in warfare are far higher.

Expert Analysis: A House Divided

The AI and defense communities are deeply divided on the ethics of autonomous weapons. On one side are those who see these systems as inevitable and potentially beneficial. They argue that AI can actually make warfare more humane by reducing collateral damage through superior targeting accuracy and by removing human soldiers from harm’s way.

General John Allen, former commander of U.S. forces in Afghanistan, has argued that “autonomous systems, properly designed and deployed, can be more discriminating and more precise than human operators under stress.” Proponents point to the fact that human soldiers make mistakes—they misidentify targets, act on incomplete information, and sometimes commit war crimes. An AI system, they argue, doesn’t experience fear, anger, or fatigue, and can process far more information before making a decision.

But critics counter that this view fundamentally misunderstands both the technology and the ethics. Dr. Noel Sharkey, a robotics expert and co-founder of the Campaign to Stop Killer Robots, argues that “no algorithm can replicate the human capacity for moral judgment, compassion, and understanding of context that is essential in warfare.” The ability to recognize surrender, to show mercy, to understand the difference between a combatant and a civilian in ambiguous situations—these are uniquely human capabilities that cannot be reduced to code.

Workers inside the tech industry are increasingly vocal in their opposition. In 2018, thousands of Google employees protested the company’s involvement in Project Maven. Google subsequently announced that it would not renew the contract and published a set of AI principles to guide its work. More recently, employees at Microsoft, Amazon, and other tech giants have raised similar concerns about their companies’ military and law-enforcement contracts.

These internal rebellions reflect a broader recognition within the AI community that the technology they’re building has consequences that extend far beyond quarterly earnings. As one former Google engineer told me: “We got into this field to make the world better, not to build better killing machines.”

Real-World Implications: From Theory to Practice

The ethical debates are no longer academic. Autonomous weapons systems are already being deployed in conflict zones around the world, with varying degrees of human oversight.

In Ukraine, both sides have used AI-powered drones for reconnaissance and targeting. Turkey’s Kargu-2 drone, which can autonomously select and engage targets, was reportedly used in Libya in 2020, according to a 2021 United Nations Panel of Experts report. Israel has deployed autonomous defensive systems for years and is believed to be developing offensive capabilities as well. China has showcased swarms of autonomous drones in military demonstrations, signaling its intent to lead in this domain.

The proliferation of these systems creates new risks beyond the battlefield. Autonomous weapons technology is becoming increasingly accessible, raising the specter of terrorist groups or rogue states acquiring lethal AI capabilities. Unlike nuclear weapons, which require significant infrastructure and expertise, autonomous drones can be built with commercially available components and open-source AI software.

There’s also the risk of an arms race dynamic. As more nations develop autonomous weapons, others feel compelled to follow suit or risk strategic disadvantage. This creates pressure to deploy systems before they’re fully tested or before adequate safeguards are in place. The result could be a world awash in autonomous weapons, with all the attendant risks of accidents, escalation, and proliferation.

For businesses and investors, the implications extend beyond defense contractors. The same AI technologies being developed for military applications—computer vision, autonomous navigation, decision-making algorithms—have civilian applications in everything from self-driving cars to industrial automation. Companies working in these areas face growing pressure to establish clear ethical guidelines and to ensure their technology isn’t repurposed for weapons systems.

At Savanti Investments, we’re closely monitoring how this ethical debate shapes the AI industry. Companies that proactively address these concerns and establish robust governance frameworks are likely to be better positioned for long-term success than those that ignore the ethical dimensions of their technology. Our QuantAI™ platform incorporates ethical considerations into our investment analysis, recognizing that ESG factors—including responsible AI development—are increasingly material to company valuations.

The Regulatory Landscape: Calls for a Global Treaty

The international community has been grappling with how to regulate autonomous weapons for over a decade, with limited progress. The United Nations Convention on Certain Conventional Weapons (CCW) has held numerous meetings on lethal autonomous weapons systems (LAWS), but has failed to reach consensus on binding regulations.

The main sticking points are definitional and strategic. What exactly constitutes “meaningful human control”? How much autonomy is too much? And crucially, which nations are willing to forgo a technology that could provide significant military advantage?

Despite these challenges, momentum is building for stronger international norms. Over 30 countries have called for a ban on fully autonomous weapons systems. The International Committee of the Red Cross has urged states to adopt new rules ensuring that autonomous weapons comply with international humanitarian law. And civil society organizations like the Campaign to Stop Killer Robots have mobilized public opinion and lobbied governments worldwide.

Some experts advocate for a treaty modeled on the Chemical Weapons Convention, which bans an entire class of weapons. Others argue for a more nuanced approach that allows defensive systems while prohibiting offensive autonomous weapons. Still others believe that technology-specific bans are futile and that the focus should be on ensuring accountability and human oversight regardless of the level of autonomy.

The European Union illustrates the difficulty of achieving consensus even among like-minded democracies. The EU AI Act subjects “high-risk” civilian applications to strict requirements, but it explicitly excludes AI systems developed or used exclusively for military, defence, or national security purposes, so it neither regulates nor bans autonomous weapons.

Future Outlook: The Path Forward

As we look ahead, several scenarios seem possible. In the optimistic case, the international community reaches agreement on meaningful restrictions on autonomous weapons, establishing clear red lines around human control and accountability. Companies and militaries develop robust governance frameworks that ensure AI systems are used responsibly. The technology is channeled primarily toward defensive applications and humanitarian purposes.

In the pessimistic scenario, the autonomous arms race accelerates, with nations competing to deploy increasingly sophisticated systems. The lack of international agreement leads to proliferation, with autonomous weapons spreading to non-state actors and rogue regimes. An accident or miscalculation triggers a conflict that escalates beyond human control, demonstrating the dangers of ceding life-and-death decisions to machines.

The most likely outcome probably lies somewhere in between. We’ll see continued development and deployment of autonomous weapons systems, but with growing constraints and oversight. Some applications—particularly defensive systems with clear human oversight—will become normalized. Others—such as fully autonomous offensive weapons—will face increasing stigma and potential restrictions.

What’s clear is that the decisions we make in the next few years will shape the future of warfare and, potentially, the future of humanity. The technology is advancing rapidly, but our ethical frameworks and regulatory structures are struggling to keep pace. We need a serious, sustained conversation about where to draw the line—and the courage to enforce it.

For those of us in the technology and investment communities, this isn’t someone else’s problem. The AI systems being developed in our companies and funded by our capital are the building blocks of autonomous weapons. We have a responsibility to ensure that our pursuit of innovation doesn’t come at the cost of our humanity.

The line in the sand is before us. The question is whether we have the wisdom and the will to respect it.

Conclusion: A Choice, Not a Destiny

The rise of AI in warfare is not inevitable, nor is it inherently good or evil. It is a choice—a series of choices, really—that we as a society are making about the kind of world we want to live in. Do we want a future where machines make life-and-death decisions? Where warfare is conducted at machine speed, beyond human comprehension or control? Where the capacity for violence is democratized to anyone with a laptop and a drone?

Or do we want a future where AI enhances human judgment rather than replacing it? Where technology is harnessed to prevent conflicts and protect civilians? Where we maintain meaningful human control over the most consequential decisions we can make?

The choice is ours, but the window for making it is closing. As autonomous weapons systems proliferate and become embedded in military doctrine, it becomes harder to reverse course. The time to act is now—to establish clear ethical guidelines, to demand accountability from companies and governments, and to support international efforts to regulate this technology before it’s too late.

At Savanti Investments, we believe that responsible innovation is not just an ethical imperative but a strategic advantage. Through our QuantAI™ platform and SavantTrade™ systems, we’re demonstrating that it’s possible to harness the power of AI while maintaining human oversight and accountability. The same principles that guide our investment strategies—transparency, accountability, and respect for human judgment—should guide the development of AI in all domains, including warfare.

The ethics of AI in warfare is not a technical problem to be solved, but a moral challenge to be confronted. It requires us to think deeply about our values, to engage in difficult conversations, and to make hard choices. But if we rise to this challenge, we have the opportunity to shape a future where technology serves humanity rather than threatening it.

The line in the sand is drawn. Now we must decide which side of it we stand on.