The race to build the world’s most powerful artificial intelligence has entered a new phase, and the rules have changed. On March 5, 2026, OpenAI unveiled GPT-5.4, a model that doesn’t just answer questions but can operate your computer. Two days earlier, Google had released Gemini 3.1 Flash-Lite, a fast model that generates output at roughly 381 tokens per second at a fraction of its competitors’ prices. These aren’t incremental updates. They’re strategic repositionings in an arms race that’s no longer about who builds the biggest model, but who builds the right model for the right job.

For business leaders, investors, and technologists, the implications are profound. The era of the monolithic, one-size-fits-all AI is over. Welcome to the age of specialized intelligence—where success depends not on having the single “best” model, but on orchestrating a portfolio of AIs, each optimized for specific tasks, costs, and performance requirements.

The Computer Use Revolution: GPT-5.4’s Killer Feature

Let’s start with what makes GPT-5.4 genuinely unprecedented. While previous models could describe how to use software, GPT-5.4 can actually do it. Through what OpenAI calls “native computer use,” the model interacts with desktop applications via screenshots, mouse movements, and keyboard commands—no specialized APIs required.

The benchmark numbers tell the story: GPT-5.4 achieved a 75.0% success rate on the OSWorld-Verified test, which measures autonomous completion of real-world desktop tasks. That’s higher than the human expert baseline of 72.4%. In practical terms, this means the model can navigate spreadsheets, scrape data from websites, create presentations, and even draw logos in Microsoft Paint—all without human intervention.

I’ve watched demos where GPT-5.4 reverse-engineered NES ROM files and automated complex financial modeling workflows. This isn’t a parlor trick. It’s a fundamental shift in what AI can do. We’re moving from AI as a content generator to AI as a digital workforce.

The model also brings a 1 million token context window to its API and Codex versions, enabling it to process massive documents or maintain state across long-running agentic tasks. A new “Tool Search” feature dynamically loads tool definitions only when needed, reducing token consumption in tool-heavy workflows by up to 47%. For enterprises building autonomous agents, these aren’t just nice-to-haves—they’re the infrastructure that makes complex automation viable.
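To make the idea of loading tool definitions on demand concrete, here is a minimal sketch in Python. It is not OpenAI's Tool Search API. The `ToolRegistry` class, its methods, and the three example tools are hypothetical, and the keyword match stands in for whatever retrieval the real feature uses. The point is only that the model sees short summaries up front and pays tokens for a full definition when a tool is actually relevant.

```python
from dataclasses import dataclass, field


@dataclass
class ToolRegistry:
    """Hypothetical registry: short summaries up front, full definitions on demand."""

    summaries: dict[str, str] = field(default_factory=dict)
    definitions: dict[str, dict] = field(default_factory=dict)

    def register(self, name: str, summary: str, definition: dict) -> None:
        self.summaries[name] = summary
        self.definitions[name] = definition

    def search(self, query: str) -> list[str]:
        """Return names of tools whose summary shares a word with the query."""
        words = set(query.lower().split())
        return [
            name
            for name, summary in self.summaries.items()
            if words & set(summary.lower().split())
        ]

    def load(self, names: list[str]) -> list[dict]:
        """Return full definitions only for the requested tools."""
        return [self.definitions[name] for name in names]


# Illustrative tools; the schemas are placeholders, not a real API format.
registry = ToolRegistry()
registry.register("send_email", "send email messages",
                  {"name": "send_email", "parameters": {"to": "string", "body": "string"}})
registry.register("query_db", "query sales database",
                  {"name": "query_db", "parameters": {"sql": "string"}})
registry.register("add_event", "create calendar events",
                  {"name": "add_event", "parameters": {"title": "string", "start": "string"}})

matches = registry.search("send an email to the client")
loaded = registry.load(matches)  # only the matching definition enters the prompt
```

In this toy run only one of the three definitions is loaded. With hundreds of registered tools, that is where the token savings would come from.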

Speed vs. Power: Google’s Strategic Counter

While OpenAI pushes the boundaries of what AI can do, Google is redefining what AI can do at scale. Gemini 3.1 Flash-Lite, released March 3, is Google’s answer to a different question: How do you make frontier-level intelligence accessible for high-volume, cost-sensitive applications?

The answer is speed and efficiency. At $0.25 per million input tokens and $1.50 per million output tokens, Gemini 3.1 Flash-Lite is one of the most affordable frontier models on the market. But affordability means nothing if performance suffers, and here’s where Google’s engineering shines. The model generates output at approximately 381 tokens per second, with a time to first answer token that’s 2.5x faster than Gemini 2.5 Flash’s.
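A quick back-of-the-envelope calculation shows what those prices mean at volume. The sketch below uses only the figures quoted above. The workload (one million requests a day, 2,000 input tokens and 300 output tokens each) is a made-up example, and the function names are my own.

```python
# Prices quoted above for Gemini 3.1 Flash-Lite (USD per million tokens).
INPUT_PRICE_PER_M = 0.25
OUTPUT_PRICE_PER_M = 1.50
# Approximate output speed quoted above (tokens per second).
OUTPUT_TOKENS_PER_SEC = 381


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one request at the quoted per-token prices."""
    total = input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M
    return total / 1_000_000


def generation_seconds(output_tokens: int) -> float:
    """Return the approximate streaming time, ignoring time to first token."""
    return output_tokens / OUTPUT_TOKENS_PER_SEC


# Hypothetical workload: 1 million requests per day, 2,000 tokens in, 300 out.
daily_requests = 1_000_000
daily_cost = daily_requests * request_cost(2_000, 300)
seconds_per_reply = generation_seconds(300)

print(f"Daily cost: ${daily_cost:,.2f}")
print(f"Seconds per reply: {seconds_per_reply:.2f}")
```

Under these assumptions the bill comes to about $950 per day, and each 300-token reply streams in under a second.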

What makes this particularly clever is the model’s “adaptive intelligence” feature. Developers can select from “minimal,” “low,” “medium,” or “high” thinking levels, dynamically trading response quality for speed. This means a single model can serve everything from real-time chatbots to complex analytical tasks—without switching endpoints or managing multiple deployments.

As a natively multimodal model, Gemini 3.1 Flash-Lite processes text, code, images, audio, video, and PDFs. Its 1 million token context window can handle up to 45 minutes of video in a single request. For applications like content moderation, video analysis, or dynamic UI generation, this combination of speed, multimodality, and cost-efficiency is game-changing.

The End of the “Best Model” Era

Here’s the uncomfortable truth that many in the AI community are still grappling with: there is no longer a single “best” AI model. The March 2026 landscape is multipolar, with different models excelling in different domains.

GPT-5.4 dominates professional knowledge work, scoring 83.0% on the GDPval benchmark, which measures performance across 44 real-world occupations. It matches or exceeds human-level performance in the vast majority of tested tasks. For high-stakes enterprise applications—legal document analysis, financial modeling, strategic planning—it’s the premium choice.

But Anthropic’s Claude Opus 4.6 leads on the SWE-Bench coding benchmark and is often preferred for its nuanced, human-like writing style. Gemini 3.1 Pro (the full version, not Flash-Lite) excels in abstract reasoning. And emerging challengers like xAI’s Grok 4.20 Beta—with its 2 million token context window and claimed lowest hallucination rate—are pushing the boundaries even further.

Meanwhile, DeepSeek’s models offer frontier-level performance at dramatically lower costs, and Meta’s open-source Llama series enables rapid community-driven innovation without vendor lock-in.

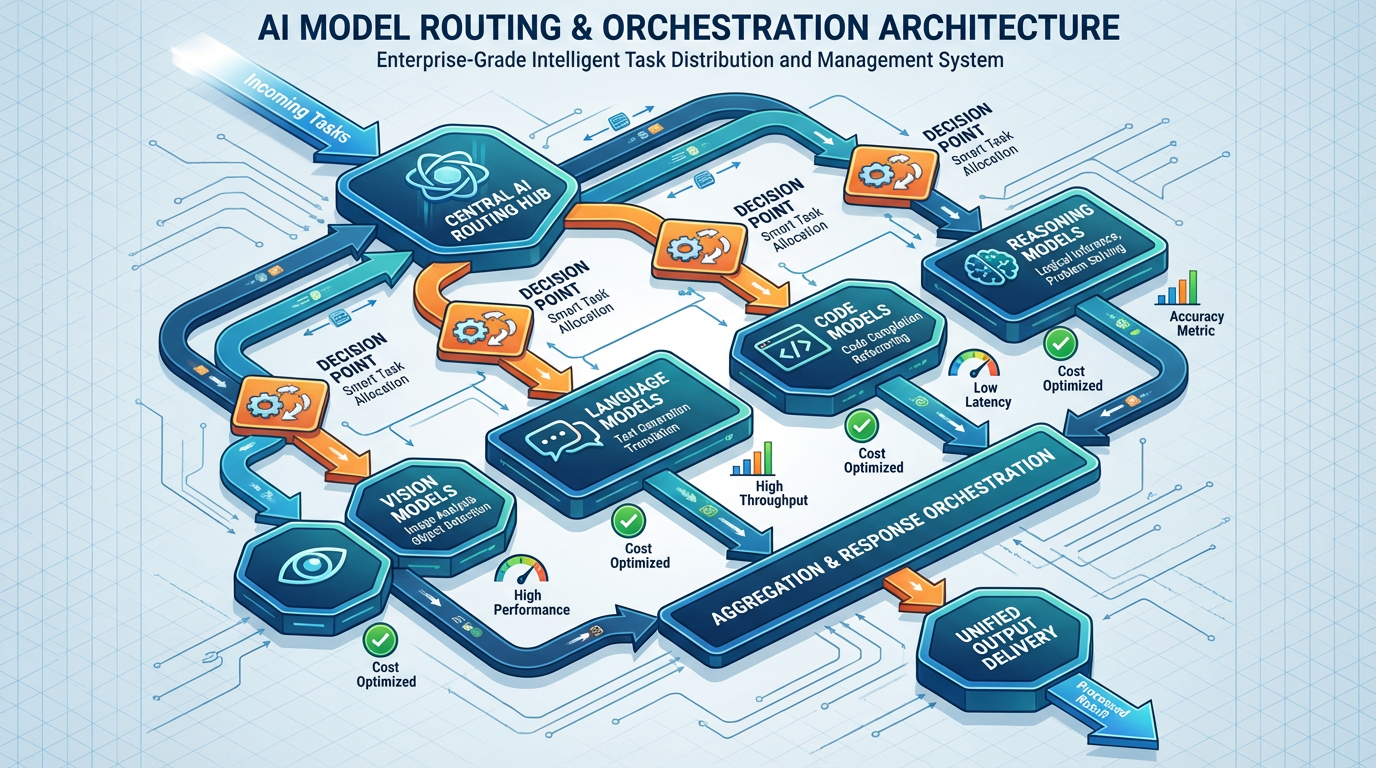

The strategic implication? Model routing is the new competitive advantage. The most sophisticated AI deployments in 2026 don’t rely on a single model. They orchestrate multiple specialized AIs, routing tasks to the most appropriate model based on complexity, cost, and latency requirements.
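Routing on complexity, cost, and latency can be sketched in a few lines of Python. Everything here is illustrative: the `ModelProfile` and `Task` types, the 1-to-5 capability scale, and the tier names are hypothetical. The fast tier borrows the Flash-Lite price and speed quoted earlier, while the premium tier's numbers are placeholders rather than real pricing.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelProfile:
    name: str
    capability: int            # hypothetical 1-5 score, higher is more capable
    cost_per_m_output: float   # USD per million output tokens
    tokens_per_sec: float      # approximate output speed


@dataclass(frozen=True)
class Task:
    complexity: int            # minimum capability required, 1-5
    max_cost_per_m: float      # budget ceiling, USD per million output tokens
    min_tokens_per_sec: float  # latency requirement


def route(task: Task, models: list[ModelProfile]) -> Optional[ModelProfile]:
    """Pick the cheapest model that meets every requirement, or None."""
    eligible = [
        m for m in models
        if m.capability >= task.complexity
        and m.cost_per_m_output <= task.max_cost_per_m
        and m.tokens_per_sec >= task.min_tokens_per_sec
    ]
    return min(eligible, key=lambda m: m.cost_per_m_output, default=None)


models = [
    # Price and speed echo the Flash-Lite figures quoted in this article.
    ModelProfile("fast-tier", capability=2, cost_per_m_output=1.50, tokens_per_sec=381.0),
    # Placeholder numbers, not real pricing for any vendor.
    ModelProfile("premium-tier", capability=5, cost_per_m_output=15.0, tokens_per_sec=60.0),
]

simple = route(Task(complexity=1, max_cost_per_m=5.0, min_tokens_per_sec=200.0), models)
hard = route(Task(complexity=4, max_cost_per_m=20.0, min_tokens_per_sec=30.0), models)
impossible = route(Task(complexity=5, max_cost_per_m=5.0, min_tokens_per_sec=30.0), models)
```

The simple task lands on the fast tier, the hard one on the premium tier, and the third returns `None` because no model is both capable enough and within budget. A production router would also need a fallback policy for that last case.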

What This Means for Business Strategy

At Savanti Investments, we’ve been tracking this shift closely, particularly its implications for quantitative finance and algorithmic trading. The move from monolithic to specialized AI architectures mirrors a broader trend we’re seeing across industries: the unbundling of intelligence.

For enterprises, the practical playbook looks like this:

1. Build a Model Routing Architecture

Use cost-effective models like Gemini 3.1 Flash-Lite as your “execution layer” for high-volume, simple tasks—data classification, sentiment analysis, basic customer service. Escalate to more powerful (and expensive) models like GPT-5.4 only when complex reasoning or high-fidelity execution is required. This cascading approach can reduce AI costs by 60-80% while maintaining quality.

2. Invest in Agentic Infrastructure

GPT-5.4’s computer use capabilities aren’t just a feature—they’re a preview of the next generation of enterprise software. Companies that build robust agentic workflows now will have a significant advantage as these capabilities mature. Think beyond chatbots. Think autonomous financial analysts, legal researchers, and marketing strategists.

3. Embrace Multimodal Intelligence

The ability to process video, audio, images, and text in a single context window opens entirely new application categories. For financial services, this means analyzing earnings calls, investor presentations, and market sentiment simultaneously. For retail, it means understanding customer behavior across every touchpoint. The companies that figure out how to leverage this multimodal intelligence first will define the next decade of competitive advantage.

4. Prepare for the Orchestration Layer

As the model ecosystem grows more complex, the real value will shift to the orchestration layer—the platforms and tools that manage model routing, performance monitoring, and cost optimization. This is where we’re seeing significant investment opportunities, particularly in the infrastructure that enables enterprises to deploy AI at scale without drowning in complexity.
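The cascading approach from step 1 can be sketched as follows. This is a toy illustration, not a production pattern: `cheap_model` and `strong_model` are stubs standing in for real API calls, the confidence score is assumed to come from the cheap model or a separate grader, and the per-request costs are hypothetical units chosen only to show the arithmetic.

```python
from typing import Callable


def cheap_model(prompt: str) -> tuple[str, float]:
    """Stub for a low-cost model: confident on short prompts, unsure on long ones."""
    if len(prompt.split()) <= 8:
        return "positive", 0.95
    return "unsure", 0.40


def strong_model(prompt: str) -> str:
    """Stub for a premium model."""
    return "detailed analysis"


def cascade(
    prompt: str,
    cheap: Callable[[str], tuple[str, float]],
    strong: Callable[[str], str],
    threshold: float = 0.8,
) -> tuple[str, str]:
    """Try the cheap model first; escalate only when its confidence is low."""
    answer, confidence = cheap(prompt)
    if confidence >= threshold:
        return answer, "cheap"
    return strong(prompt), "strong"


def blended_cost(escalation_rate: float, cheap_cost: float, strong_cost: float) -> float:
    """Average cost per request: all pay for cheap, escalated ones also pay for strong."""
    return cheap_cost + escalation_rate * strong_cost


short_result = cascade("Great product, fast shipping", cheap_model, strong_model)
long_result = cascade(
    "Compare the covenant terms across these three credit agreements and flag conflicts",
    cheap_model,
    strong_model,
)

# Hypothetical costs: cheap = 1 unit, strong = 10 units, 20% of requests escalate.
average = blended_cost(escalation_rate=0.2, cheap_cost=1.0, strong_cost=10.0)
savings = 1 - average / 10.0  # relative to sending everything to the strong model
```

With these made-up numbers the average cost is 3 units per request, about 70% below sending everything to the strong model. The real figure depends heavily on the escalation rate and the price gap between tiers, so it is worth measuring both on your own traffic.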

The Bigger Picture: Where This Is Heading

The GPT-5.4 vs. Gemini 3.1 Flash-Lite dynamic isn’t just about two models. It’s a microcosm of the broader AI landscape in 2026—a landscape defined by specialization, competition, and the relentless push toward practical, deployable intelligence.

Several trends are accelerating:

The Agentic AI Race: Competition is shifting from pure reasoning benchmarks to agentic capabilities—the ability to autonomously plan and execute complex, long-running tasks. Performance on benchmarks like OSWorld (computer use) and BridgeBench (multi-agent coordination) will become the new standard for measuring AI capability.

Context Window Expansion: The “context window war” continues. xAI’s Grok 4.20 Beta, with its 2 million token context window, signals that multi-million token windows will become standard. This enables AI to comprehend entire codebases, legal archives, or research libraries in a single pass, fundamentally changing how we think about AI’s role in knowledge work.

The Open vs. Closed Debate: The tension between closed-source models (OpenAI, Google) and open-source alternatives (Meta’s Llama, DeepSeek) is intensifying. Closed-source ensures quality control and clear monetization but creates vendor lock-in risks. Open-source fosters innovation and customization but requires more technical sophistication to deploy effectively. Most enterprises will end up using both.

Compute as Competitive Moat: The development of frontier models is fundamentally constrained by access to massive data centers and specialized AI hardware. Google’s proprietary TPU infrastructure and OpenAI’s partnership with Microsoft, which gives it access to Azure data centers and NVIDIA GPUs, create structural advantages that are difficult to replicate. This compute war will determine which companies can sustain the pace of innovation.

The Savanti Perspective: Intelligence as Infrastructure

At Savanti Investments, we’ve built our QuantAI™ platform on the principle that the future of finance isn’t about replacing human judgment with AI—it’s about augmenting human intelligence with specialized AI systems that excel at specific tasks. Our SavantTrade™ algorithmic trading infrastructure already uses a model routing approach, deploying different AI architectures for market sentiment analysis, risk assessment, and trade execution.

The GPT-5.4 and Gemini 3.1 Flash-Lite releases validate this strategy. The winners in the AI era won’t be those who bet on a single model or vendor. They’ll be those who build flexible, orchestrated systems that can adapt as the technology evolves.

For our portfolio companies and clients, the message is clear: Start building your AI orchestration layer now. Experiment with multiple models. Understand their strengths and weaknesses. Build the infrastructure to route tasks intelligently. The companies that master this orchestration will have a decisive advantage as AI capabilities continue to accelerate.

Looking Forward

The AI arms race of 2026 isn’t about who builds the biggest model. It’s about who builds the most effective ecosystem of models. OpenAI’s GPT-5.4 demonstrates the power of agentic, computer-operating AI. Google’s Gemini 3.1 Flash-Lite shows the value of speed and efficiency at scale. Anthropic, xAI, DeepSeek, and others are pushing boundaries in their own ways.

The real innovation isn’t happening at the model level—it’s happening at the orchestration level. The companies and investors who understand this shift will be the ones who capture value in the next phase of the AI revolution.

The question isn’t which model will win. The question is: How will you orchestrate them?

Braxton Tulin is the founder of Savanti Investments, where he leads the development of QuantAI™ and SavantTrade™, next-generation platforms for algorithmic trading and quantitative finance. He writes about the intersection of artificial intelligence, finance, and exponential technology.