This article is for educational and informational purposes only and is not investment advice. Please consult with a qualified financial advisor before making any investment decisions.

In the quiet corridors of AI research labs and the bustling forums of open-source communities, a trillion-dollar conflict is brewing. On one side: the democratizing force of open-source AI, promising to put the power of artificial intelligence in everyone’s hands. On the other: the growing chorus of safety advocates, regulators, and risk-averse institutions warning that unfettered access to powerful AI could unleash catastrophic consequences.

This isn’t a theoretical debate. It’s a clash that will reshape the AI industry, redefine competitive moats, and create—or destroy—trillions in market value over the next decade. And if you’re an investor, entrepreneur, or business leader, understanding this conflict isn’t optional. It’s existential.

What’s Happening: The Open-Source AI Explosion

The open-source AI movement has reached an inflection point. What began as academic curiosity has evolved into a full-scale industrial revolution. Meta’s Llama models, Mistral AI’s efficient architectures, and China’s DeepSeek have proven that open-source models can rival—and in some cases surpass—their proprietary counterparts.

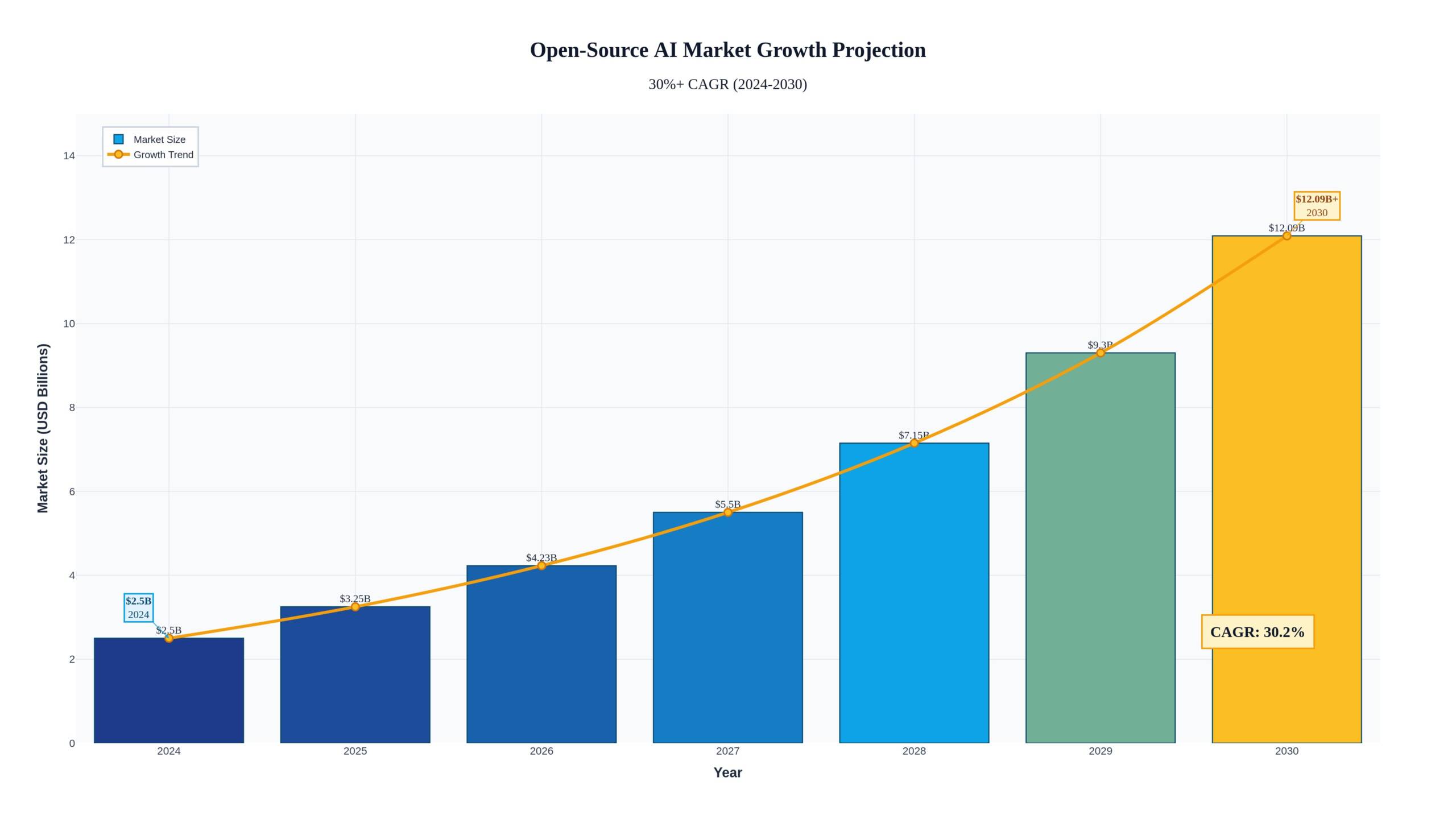

The numbers tell the story. The open-source AI market is projected to grow from $2.5 billion in 2024 to over $15 billion by 2030, which implies a compound annual growth rate of roughly 35%. But these figures dramatically understate the true impact. When you factor in the downstream applications, infrastructure, and services built on open-source foundations, we’re talking about a multi-trillion-dollar ecosystem.
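For readers who want to check the growth-rate arithmetic behind that projection, here is a minimal sketch in Python. It assumes six compounding years between 2024 and 2030 and treats $15 billion as the end value; the function name `cagr` is illustrative, not taken from any particular library.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the compound annual growth rate as a fraction (0.30 means 30%)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Market-size figures quoted above, in billions of dollars.
# Assumption: 2024 -> 2030 is counted as six compounding periods.
rate = cagr(2.5, 15.0, years=2030 - 2024)
print(f"Implied CAGR: {rate:.1%}")
```

Under those assumptions the implied rate comes out a little under 35%, comfortably above the 30% threshold. Counting seven periods instead of six would lower the result, so the answer depends on how the endpoints are defined.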

Meta’s decision to open-source its Llama family of models was a watershed moment. By February 2026, Llama 3 had been downloaded over 600 million times, spawning thousands of derivative applications across healthcare, finance, education, and beyond. Mistral AI, the French startup valued at $6 billion, has built its entire business model on open-source excellence, proving that “free” doesn’t mean unprofitable.

The technical barriers that once protected closed-source AI are crumbling. Training costs have plummeted: a training run that cost $100 million in 2022 can now be achieved for under $5 million, a drop of at least 95%. Compute has been democratized through cloud providers and specialized AI infrastructure companies. The knowledge gap is closing as research papers are published within weeks of breakthroughs, and implementation details spread through GitHub repositories and Discord servers.

Why It Matters: The Safety Paradox

Here’s where the conflict intensifies. As open-source AI becomes more powerful and accessible, the potential for misuse grows with it. These risks aren’t purely hypothetical; some of the consequences are already visible today.

Consider the dual-use dilemma. The same language model that can help a researcher discover new antibiotics can also help a bad actor design novel bioweapons. The same computer vision system that enables autonomous vehicles can power surveillance states. The same generative AI that creates marketing content can produce deepfakes indistinguishable from reality.

The regulatory response has been swift and fragmented. The EU AI Act, which entered into force in August 2024, takes a risk-based approach that places significant compliance burdens on “high-risk” AI systems. But here’s the catch: the Act struggles to regulate open-source models effectively. How do you hold a decentralized community of developers accountable? Who’s liable when an open-source model is fine-tuned for malicious purposes?

In the United States, the patchwork of state-level regulations is creating a compliance nightmare. California’s SB 1047 (though ultimately vetoed) sparked a national conversation about frontier model safety. Colorado’s AI Act focuses on algorithmic discrimination. Texas emphasizes transparency. Each jurisdiction is writing its own rulebook, and open-source developers are caught in the crossfire.

The investment implications are profound. Companies building on closed-source models from OpenAI, Anthropic, or Google can point to centralized governance, safety teams, and compliance frameworks. But what happens when your competitor builds the same application on an open-source model at a fraction of the cost and with none of the licensing restrictions? This is the trillion-dollar question keeping venture capitalists and corporate strategists awake at night.

Expert Analysis: The Great Divergence

The AI community is deeply divided on this issue, and the fault lines reveal competing visions for the future of technology and society.

The Open-Source Advocates argue that transparency is the ultimate safety mechanism. Yann LeCun, Meta’s Chief AI Scientist, has been vocal: “Open-source AI is not just safer—it’s the only path to truly safe AI. When everyone can inspect the code, audit the training data, and understand the decision-making process, we create accountability that no closed system can match.”

This camp points to the history of software security. Linux, the open-source operating system, powers the majority of the world’s servers, and its advocates credit that success in part to transparency, which allows for rapid identification and patching of vulnerabilities. Why should AI be different?

They also emphasize the concentration risk of closed-source AI. If a handful of companies control the most powerful AI systems, we’re creating single points of failure—and potential abuse. Open-source distributes power, prevents monopolies, and ensures that AI benefits humanity broadly rather than enriching a select few.

The Safety-First Camp sees it differently. Dario Amodei, CEO of Anthropic, represents this perspective: “The difference between software and AI is that software does what you tell it to do. AI does what it thinks you want it to do. That unpredictability, combined with increasing capability, creates risks that transparency alone cannot mitigate.”

This group worries about the “race to the bottom” dynamic. If powerful AI capabilities are freely available, bad actors will inevitably exploit them. Unlike a software vulnerability, which can be patched, released model weights can’t be recalled, and the capabilities they encode can’t be “unlearned” once they’re in the wild. The genie doesn’t go back in the bottle.

They advocate for a tiered approach: open-source for less capable models, strict controls and licensing for frontier systems that approach or exceed human-level performance in critical domains. Think of it like nuclear technology: we don’t publish the engineering details of weapons-grade uranium enrichment, even if the underlying physics is well understood.

Real-World Implications: Follow the Money

This philosophical debate has very practical consequences for investors and businesses. Let me share what I’m seeing from my vantage point at Savanti Investments.

The Compliance Tech Boom: There’s a massive opportunity in AI governance and compliance tools. Companies like Credo AI, Arthur AI, and Fiddler are building the infrastructure to audit, monitor, and certify AI systems—whether open-source or proprietary. As regulations tighten, every enterprise deploying AI will need these tools. We’re talking about a market that could reach $50 billion by 2030.

At Savanti, we’ve integrated similar governance frameworks into our QuantAI™ platform. When you’re making real-time trading decisions with AI, you need bulletproof audit trails, explainability, and risk controls. The same principles apply across industries.
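To make the idea of a tamper-evident audit trail concrete, here is a minimal sketch in Python. It is an illustration only, not a description of how QuantAI™ or any vendor's product works: the hash-chaining approach, the function names `append_entry` and `verify`, and the sample decision records are all assumptions introduced for this example.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, decision: dict) -> str:
    """Hash the previous entry's hash together with this decision record."""
    payload = json.dumps(decision, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list, decision: dict) -> dict:
    """Append a decision record, chaining it to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    entry = {
        "decision": decision,
        "prev_hash": prev_hash,
        "hash": _entry_hash(prev_hash, decision),
    }
    log.append(entry)
    return entry


def verify(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        expected = _entry_hash(prev_hash, entry["decision"])
        if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical decision records, for illustration only.
log = []
append_entry(log, {"model": "example-model", "action": "BUY", "qty": 100})
append_entry(log, {"model": "example-model", "action": "SELL", "qty": 40})
print(verify(log))
```

Because each entry's hash depends on the one before it, changing any past record after the fact causes `verify` to fail. A production system would also need secure storage, timestamps, and access controls, which this sketch leaves out.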

The Infrastructure Play: The open-source AI boom is driving massive demand for specialized infrastructure. Companies providing secure compute environments, federated learning platforms, and privacy-preserving AI tools are seeing explosive growth. Hugging Face, valued at $4.5 billion, has become the GitHub of AI—and they’re just getting started.

The Licensing Innovation: We’re seeing creative new business models emerge. Mistral AI offers both open-source models and premium, safety-enhanced versions with enterprise support and indemnification. It’s the “open core” model applied to AI, and it’s working. Their revenue run rate exceeded $100 million in 2025, less than two years after founding.

The Insurance Market: AI liability insurance is emerging as its own product category. As companies deploy AI systems, especially open-source ones they’ve customized, they need protection against potential harms. Insurers are developing new products, and the premiums reflect the perceived risks. Expect this to become a multi-billion-dollar market.

The Talent War: Companies that can navigate both the open-source ecosystem and the regulatory landscape have a massive competitive advantage. We’re seeing salaries for AI safety engineers and compliance specialists skyrocket. At Savanti, we’ve built a team that understands both the technical and regulatory dimensions—it’s not optional anymore.

The Risks: What Could Go Wrong

Let’s be clear-eyed about the downside scenarios, because they’re not trivial.

Regulatory Crackdown: Governments could decide that the risks of open-source AI outweigh the benefits and impose draconian restrictions. This could stifle innovation, drive development underground, or create a two-tiered system where only large, well-resourced organizations can participate. The economic cost would be measured in trillions of lost productivity and innovation.

Catastrophic Accident: A powerful open-source model could be misused in ways that cause significant harm—a bioweapon designed with AI assistance, a financial system crash triggered by algorithmic manipulation, or a disinformation campaign that destabilizes democracies. The backlash would be severe and potentially set the entire AI industry back years.

Liability Cascade: As AI systems become more autonomous and consequential, the question of liability becomes critical. If an open-source model causes harm, who’s responsible? The original developers? The company that fine-tuned it? The end user? The legal uncertainty is a massive risk for anyone building on open-source foundations.

Competitive Disadvantage: Companies that invest heavily in safety and compliance may find themselves outcompeted by rivals who cut corners with open-source models. This creates a race-to-the-bottom dynamic that could undermine the entire safety ecosystem.

Future Outlook: The Path Forward

So where does this conflict lead? Based on my analysis and conversations with leaders across the AI ecosystem, I see three plausible scenarios over the next three to five years.

Scenario 1: Regulatory Convergence (40% probability)

Governments and industry coalesce around a pragmatic framework that allows open-source innovation for less capable models while imposing licensing and safety requirements for frontier systems. Think of it like pharmaceuticals: you can buy aspirin over the counter, but you need a prescription for opioids. This would create a stable, predictable environment for investment and innovation.

Scenario 2: Fragmentation (35% probability)

The regulatory landscape remains balkanized, with different jurisdictions taking radically different approaches. The EU goes strict, the US stays permissive, China pursues sovereign AI, and emerging markets become havens for unrestricted development. This creates complexity and compliance costs but also arbitrage opportunities for nimble players.

Scenario 3: Safety Crisis (25% probability)

A high-profile incident involving open-source AI triggers a global crackdown. Regulations become restrictive, innovation slows, and the industry consolidates around a few large, heavily regulated players. This would be painful in the short term but might be necessary to build long-term trust and sustainability.

My bet? We’ll see elements of all three, with Scenario 1 gradually emerging as the dominant paradigm by 2028-2029. The key will be developing technical solutions—like watermarking, capability controls, and federated governance—that allow us to have our cake and eat it too: the innovation benefits of open-source with the safety assurances that society demands.

At Savanti Investments, we’re positioning our portfolio for this future. We’re investing in companies that bridge the divide: open-source-friendly but safety-conscious, innovative but compliant, accessible but responsible. Our SavantTrade™ platform, for instance, leverages open-source AI components where appropriate but wraps them in proprietary risk management and governance layers.

Conclusion: The Trillion-Dollar Opportunity

The conflict between AI safety and open-source isn’t going away—it’s intensifying. But conflicts create opportunities for those who understand the dynamics and position themselves strategically.

The winners in this new landscape will be the companies and investors who recognize that safety and innovation aren’t opposing forces—they’re complementary. The most valuable AI companies of the next decade won’t be the ones with the most powerful models or the most permissive licenses. They’ll be the ones that earn trust by demonstrating that they can deliver cutting-edge capabilities while managing risks responsibly.

This is the future we’re building at Savanti Investments. Not AI for AI’s sake, but AI that creates value sustainably, ethically, and profitably. The trillion-dollar conflict isn’t a problem to be solved—it’s a market to be understood and navigated.

The question isn’t whether you’ll be affected by this conflict. You will be. The question is whether you’ll be prepared.